LGWR: Primary database is in MAXIMUM AVAILABILITY mode | ORA-16072: a minimum of one standby database destination is required

July 29, 2019

Problem:

One of our customers cloned a database from a Data Guard (DG) environment to a different host and tried to open it as a standalone database. The cloned controlfile still recorded the database as being in maximum availability mode, which requires at least one standby destination, so the open failed.

Errors after trying to open the database:

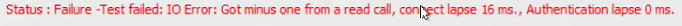

    LGWR: Primary database is in MAXIMUM AVAILABILITY mode
    LGWR: Destination LOG_ARCHIVE_DEST_1 is not serviced by LGWR
    LGWR: Minimum of 1 LGWR standby database required
    Thu Jul 18 18:43:14 2019
    Errors in file /u01/app/oracle/diag/rdbms/orcl/orcl2/trace/orcl2_lgwr_39735_39805.trc:
    ORA-16072: a minimum of one standby database destination is required

Solution:

    SQL> startup mount;
    SQL> alter database set standby database to maximize performance;
    SQL> shutdown immediate;
    $ srvctl start database -d orcl
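To confirm that the change took effect, the protection mode can be read back from `V$DATABASE`. The following is only a minimal sketch: it assumes `sqlplus` is on the `PATH`, the Oracle environment (`ORACLE_HOME`, `ORACLE_SID`) is set for the cloned instance, and OS authentication as SYSDBA works on that host. The function name `show_protection_mode` is made up for this example.

```shell
#!/bin/sh
# Hypothetical helper: print the protection mode and level recorded for
# the database. Assumes sqlplus is on PATH and "/ as sysdba" can connect.
show_protection_mode() {
  # The quoted 'EOF' keeps the shell from expanding $database in v$database.
  sqlplus -s "/ as sysdba" <<'EOF'
set heading off feedback off
select protection_mode || ' / ' || protection_level from v$database;
exit
EOF
}
```

Calling `show_protection_mode` while the database is mounted or open should no longer report `MAXIMUM AVAILABILITY` as the protection mode once the `alter database` statement has been applied.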