OPATCHAUTO-72050: System instance creation failed, cluvfy fails Verifying ‘/tmp/’ …FAILED (PRVF-7546)

March 20, 2022

Problem:

While running cluvfy we get the following error:

[grid@rac1 grid]$ ./runcluvfy.sh stage -pre crsinst -n rac1,rac2 -verbose
...
Failures were encountered during execution of CVU verification request "stage -pre crsinst".
...
Verifying '/tmp/' ...FAILED
Cannot run program "/usr/bin/scp": error=13, Permission denied

You should not continue patching when runcluvfy fails. It is strongly recommended to resolve every failed check first and only then run opatchauto. If you insist on patching anyway, you will get the following:

[root@rac1 33509923]# /u01/app/19.3.0/grid/OPatch/opatchauto apply /u01/app/patchinstall/33509923 -oh /u01/app/19.3.0/grid
OPatchauto session is initiated at Sun Mar 20 06:27:17 2022
System initialization log file is /u01/app/19.3.0/grid/cfgtoollogs/opatchautodb/systemconfig2022-03-20_06-27-42AM.log.
...
OPATCHAUTO-72050: System instance creation failed.
OPATCHAUTO-72050: Failed while retrieving system information.
OPATCHAUTO-72050: Please check log file for more details.

Reason:

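The issue in our case was that /usr/bin/scp did not have the correct permissions. Its mode was 600 (read/write for the owner only, not executable), while it should have been 755. That is why the grid user got "Permission denied" when cluvfy tried to run scp. Why did this happen? We don't really know… it should not happen.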
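To confirm the diagnosis, you can compare the mode of the scp binary with the expected value on each node. The snippet below is only a minimal sketch: `check_mode` is a hypothetical helper name, not part of any Oracle tool, and it assumes GNU `stat` (as found on Linux) is available.

```shell
#!/usr/bin/env bash
# check_mode is a hypothetical helper (not an Oracle utility).
# It compares a file's octal mode with the expected one.
# Assumes GNU stat (Linux), which supports the -c '%a' format.
# Usage: check_mode <path> <expected_mode>
check_mode() {
    local path="$1" expected="$2" actual
    # stat prints the permission bits in octal, e.g. 600 or 755
    actual=$(stat -c '%a' "$path") || return 2
    if [ "$actual" = "$expected" ]; then
        echo "OK: $path has mode $actual"
    else
        echo "MISMATCH: $path has mode $actual, expected $expected"
        return 1
    fi
}
```

Running `check_mode /usr/bin/scp 755` on each node should report a mismatch on any node where scp is not executable by the grid user.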

Solution:

Set the correct permissions on the scp binary on both nodes, as root:

# chmod 755 /usr/bin/scp

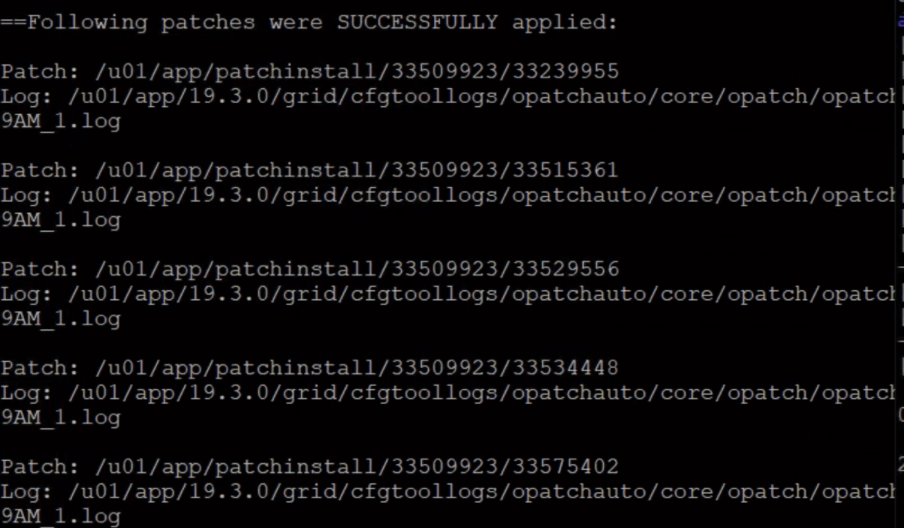
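If you prefer to apply the fix and verify it in one step, something like the following could be run as root on each node. This is a sketch under assumptions: `ensure_mode` is a hypothetical helper name, and GNU `stat` is assumed to be available.

```shell
#!/usr/bin/env bash
# ensure_mode is a hypothetical helper (not an Oracle utility).
# It sets the requested mode on a file and then re-reads the mode
# to confirm that the change took effect.
# Assumes GNU stat (Linux). Must be run by a user allowed to chmod the file.
# Usage: ensure_mode <path> <mode>
ensure_mode() {
    local path="$1" mode="$2" actual
    chmod "$mode" "$path" || return 1
    actual=$(stat -c '%a' "$path") || return 1
    if [ "$actual" = "$mode" ]; then
        echo "$path is now $actual"
    else
        echo "failed to set $mode on $path (mode is $actual)"
        return 1
    fi
}
```

For the case described here, the call would be `ensure_mode /usr/bin/scp 755`, repeated on rac1 and rac2.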

Retry patching. In our case it was successful: